Solving the mystery of named resource management in life sciences project management for organizational effectiveness

Assigning specific individuals to forecasted work, known as named resource management, improves operational efficiency, workforce engagement, and resource alignment. Learn how a mature, data-driven approach using purpose-built frameworks like Alloc8 can elevate project delivery and inform better resource planning and allocation.

Practical Insights: Real-world case studies highlight how life sciences organizations have scaled named resources maturity with enhanced resource visibility and planning.

Best Practices: Structured, transparent resource management practices, supported by Alloc8, help align resourcing with strategic priorities.

Data-driven Impact: Derive granular insights from real-time data on named resources with custom and flexible frameworks like Alloc8.

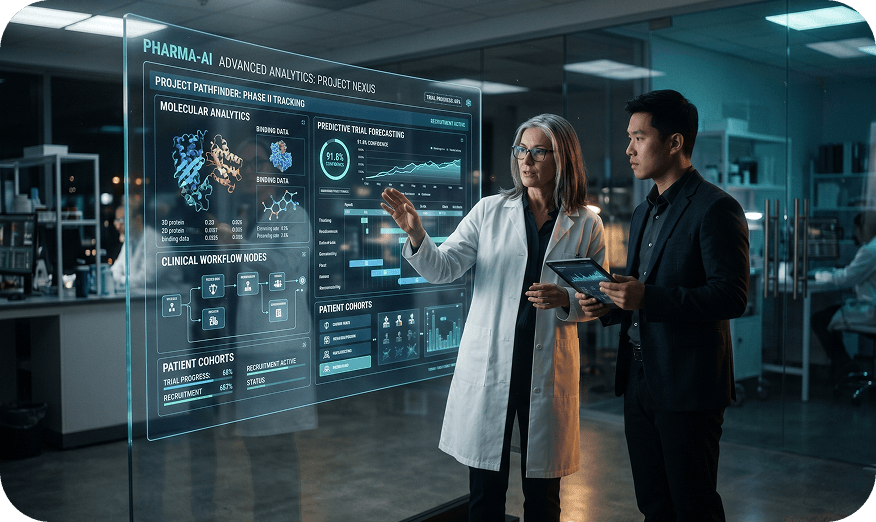

6 steps to create an effective project tracking dashboard in Pharma with AI

AI-ready project tracking dashboards are the future, and global pharma R&D companies are already adapting. Here’s why: complex clinical programs, distributed teams and increasing regulatory scrutiny require pharma R&D executives and PMO leaders to use AI-enabled dashboards with real-time insights, predictive risk signals and inspection-ready reporting. In this blog, we’ll discuss how to design AI-powered systems that connect strategy, execution and compliance in a centralized, trusted view.

What is a project tracking dashboard in pharma?

It is a centralized visual interface that brings together scattered data from Project Portfolio Management (PPM) platforms, Clinical Trial Management Systems (CTMS), finance systems and resource management tools.

It gives real-time visibility into timelines, budgets, resource allocation, milestones, risks and portfolio health across all drug development programs. Instead of static reports, executives get dynamic, role-based views aligned to portfolio, program or study-level objectives.

When augmented with AI, these dashboards go beyond descriptive reporting by introducing:

- Predictive analytics to forecast cycle times, enrolment performance or cost overruns
- Anomaly detection to flag data quality or site performance issues
- Automated alerts that surface emerging risks before they impact milestones

This shift transforms dashboards from reporting tools into decision intelligence platforms.

Why AI-powered project dashboards are now critical for global pharma R&D

AI is rapidly becoming embedded across pharmaceutical innovation and clinical development. It is already transforming drug development, including clinical trial design, recruitment and retention, through advanced analytics.
In parallel, AI/ML techniques are enabling smart monitoring of clinical data quality and trial site performance using predictive analytics and real-time visualization.

For R&D leaders, this evolution creates urgency: the dashboards they use must now reflect AI-driven operational intelligence, not just metrics.

Key drivers include:

- Increasing complexity of global, multi-arm and decentralized clinical trials
- Heightened regulatory expectations for continuous oversight and data integrity
- Demand for real-time portfolio rebalancing amid budget and pipeline pressures

AI-enabled project portfolio dashboards designed by i2e experts address these drivers by embedding predictive risk flags, recruitment and cycle-time forecasts and continuous data quality monitoring directly into executive workflows. Instead of reacting to lagging indicators, we help leadership teams gain the forward-looking insights they need most.

Key benefits of an effective project tracking dashboard with AI in pharma

An effective AI-powered dashboard delivers measurable business impact in both R&D and clinical operations.
Here are some:

- Executive decision acceleration: Predictive analytics model schedule slippage and budget variance before thresholds are breached, enabling faster go/no-go decisions at governance forums
- Proactive risk management: Pattern recognition algorithms detect site-level underperformance or data anomalies early, reducing downstream remediation costs
- Resource optimization: AI-driven capacity forecasting aligns functional resources to portfolio demand, minimizing over-allocation and idle time
- Portfolio scenario modelling: Advanced analytics simulate pipeline trade-offs under different funding or headcount scenarios, improving capital allocation discipline
- Inspection readiness: Automated data quality checks and audit trails support inspection-ready reporting, reducing reliance on manual reconciliations

Collectively, these project management dashboard capabilities help PMOs move beyond report generation and become strategic enablers. The AI layer is what converts raw data into prioritized actions.

Design and implementation challenges in pharma project dashboards with AI

While the value is clear, pharma-grade AI dashboards introduce unique challenges that must be managed deliberately. These include:

- Data integration and harmonization: AI models require clean, standardized data from validated source systems. Mitigation includes establishing a governed data model, controlled interfaces and robust data quality rules before model training.
- Model validation under GxP expectations: Predictive models influencing regulated decisions may require documented validation, version control and traceability. Companies should implement model documentation standards and structured validation protocols aligned to Quality Management Systems.
- Explainability and trust: Executives and clinical teams must understand why a model flags risk.
Techniques like feature importance reporting and transparent scoring logic improve adoption.
- Bias and data drift: Historical trial data may embed biases or become outdated. Ongoing monitoring for performance degradation, supported by MLOps practices, reduces this risk.
- Governance and role clarity: Clear ownership of data stewardship, model monitoring and dashboard updates prevents fragmentation.
- Change management: Transitioning from static reporting to AI-driven insights requires stakeholder education and alignment on new decision workflows.

Without these safeguards, AI dashboards for pharma projects risk becoming technically sophisticated but operationally underutilized.

Six steps to design a pharma project tracking dashboard with AI

A structured framework ensures that AI integration enhances, rather than complicates, portfolio oversight. Here are six steps to follow:

1. Define business questions and pharma project KPIs

Start with the executive-level decisions the dashboard must support.

- Clarify governance milestones, investment thresholds, and risk tolerance levels
- Define standardized Key Performance Indicators (KPIs) across development phases
- Align metrics with corporate strategy and portfolio objectives

The goal is to ensure the AI models are tied to well-defined decisions, not exploratory experimentation.

2. Map and prepare data from validated source systems

Identify and document all required data flows. This means:

- Integrate data from PPM platforms, CTMS, finance and resource tools
- Establish harmonized data definitions and transformation logic
- Implement automated data quality checks before AI model consumption

At i2e, we believe that data readiness is the foundation of credible predictive analytics.

3. Design role-based, inspection-ready views

The project tracking dashboard in pharma must reflect how stakeholders operate.
Your priority should be to:

- Create executive portfolio views with aggregated risk and financial indicators
- Provide program-level drilldowns for study managers and functional leads
- Ensure traceability and audit trails for inspection readiness

Inspection-ready design should be embedded from the very beginning, not retrofitted later.

4. Incorporate AI-driven analytics and predictive modelling

This is the key differentiator in a modern project tracking dashboard in pharma with AI. To get it right, you should:

- Develop predictive models for enrolment, cycle time and cost variance
- Implement anomaly detection for site performance and data quality signals
- Configure automated alerts and risk scoring aligned to governance thresholds

AI outputs must be validated, documented and continuously monitored by experts to maintain trust and compliance.

5. Build intuitive visualizations that surface risk and performance

Insight must be immediately interpretable. To achieve this, you can:

- Use color-coded risk indicators and trend lines for forward-looking metrics
- Prioritize exception-based reporting over static summaries
- Enable drill-through from portfolio to study-level detail

Good visualization design reduces cognitive load and focuses the user’s attention on taking action.

6. Establish governance, monitoring, and continuous improvement

Sustainable impact requires ongoing oversight of both dashboards and AI models.
This includes:

- Defining ownership for data, models, and reporting logic
- Implementing performance monitoring for predictive accuracy and data drift
- Periodically reassessing KPIs and models as portfolio strategy evolves

This final step ensures the project portfolio dashboard remains aligned with changing regulatory and business landscapes.

The i2e point of view: building pharma-grade project tracking dashboards with AI

At i2e Consulting, our experts approach project tracking dashboards in pharma through an AI-first, life sciences-focused lens. We integrate validated PPM platforms with advanced analytics to create connected ecosystems that link strategy, execution, and compliance.

As an AI-first partner operating across clinical and PPM, we bridge vision and execution. If you are evaluating how to implement or modernize a project tracking dashboard in pharma with AI, connect with our experts to assess your current maturity and define a roadmap aligned to your portfolio strategy.

Frequently asked questions

1. What is an AI-enabled project tracking dashboard in pharma?
An AI-enabled project tracking dashboard in pharma is a centralized reporting and analytics platform that integrates PPM, CTMS, finance and resource data and uses predictive analytics and anomaly detection to provide real-time insights.

2. How does AI improve project success in pharma projects?

AI improves project success by forecasting risks such as enrolment delays or budget overruns, detecting data quality issues early and generating automated alerts that support proactive decision-making.

3. Is AI in pharma project dashboards compliant with regulations?

AI can be compliant when models are validated, documented and monitored under appropriate governance frameworks. Organizations must ensure traceability, explainability and data integrity aligned to regulatory expectations.

4. How do pharma teams get started with AI-enabled dashboards?

Teams should begin by defining business questions and KPIs, assessing data readiness and engaging experienced partners to design validated architectures that integrate predictive analytics with inspection-ready reporting.
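
To make the anomaly detection in step 4 more concrete, here is a minimal sketch of how site-level underperformance might be flagged. It is an illustration under stated assumptions, not a validated model: the function name `flag_underperforming_sites`, the z-score rule, the threshold of -1.5 and the enrolment figures are all hypothetical, and a production model would need the validation and monitoring described above.

```python
from statistics import mean, pstdev


def flag_underperforming_sites(enrolment_by_site, z_threshold=-1.5):
    """Return the IDs of sites whose enrolment rate is unusually low.

    A site is flagged when its z-score (distance from the mean across
    all sites, in standard deviations) is at or below z_threshold.
    The threshold is an assumed governance setting, not a standard.
    """
    rates = list(enrolment_by_site.values())
    mu = mean(rates)
    sigma = pstdev(rates)
    if sigma == 0:
        # Every site enrols at the same rate, so nothing stands out.
        return []
    return sorted(
        site
        for site, rate in enrolment_by_site.items()
        if (rate - mu) / sigma <= z_threshold
    )


# Hypothetical patients-per-month figures, for illustration only.
sites = {"S01": 10.0, "S02": 11.0, "S03": 9.0, "S04": 10.0, "S05": 2.0}
print(flag_underperforming_sites(sites))  # ['S05']
```

A simple, transparent rule like this is easy to explain to clinical teams, which supports the explainability point raised earlier. More sophisticated models trade some of that transparency for sensitivity.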

Rethinking clinical data integration: From data availability to data usability

Why the "last mile" of clinical data preparation is where most programs stall - and where the greatest leverage exists.

Most clinical organizations believe they have a clinical data integration problem because their data is fragmented, they have many vendors or their studies are complex.

This belief is outdated.

In reality, many modern clinical programs, particularly at mid-to-large sponsors, already have centralized platforms, data lakes and automated pipelines. Data does arrive. Data is stored. Dashboards exist.

And yet, clinical teams still spend days preparing data before they can analyze it - highlighting a gap between traditional data management in clinical trials and the needs of downstream analytics and monitoring. This is the paradox of modern clinical data integration: the data is available, but it is not usable.

Figure 1: Modern clinical programs have centralized data, but manual preparation still sits between data access and analysis.

The industry’s blind spot: When “integrated” still means “manual”

Over the last decade, the industry has made massive investments in:

- EDC standardization
- Central data platforms
- Modern data lakes and warehouses
- Analytics and monitoring tools

These investments solved an important problem: data availability.

But they quietly introduced a new one.

Clinical data integration is often declared “done” once data lands in a central repository.

From that point on, preparation is pushed downstream to monitors, analysts and study teams.

That is where things start to break. Preparation logic lives in personal scripts or spreadsheets. Outputs vary between users and runs. Knowledge stays tribal - locked in individual workflows rather than encoded as reusable assets. Handoffs are slow and fragile, and at scale, this doesn't just slow teams down - it erodes trust in the data itself.

Clinical data integration is not complete when data is centralized.
It is complete when data can be repeatedly used without re-engineering.

This reframes integration from a platform problem to a capability problem.

The real question becomes:

- Can different users get the same answer from the same data?
- Can analysis be rerun without rethinking logic?
- Can insights be generated without heroics?

If the answer is no, integration investment hasn't yet delivered its full return.

Why data lakes and warehouses cannot solve this alone

This is where many programs get stuck.

Data lakes are excellent at absorbing variability. Data warehouses are excellent at delivering consistency.

But neither guarantees usability.

In practice:

- Data lakes hold integrated data, but 'integrated' at the lake level often means co-located, not harmonised - still too raw for operational use. For instance, EDC, lab and vendor datasets may sit side by side in the lake but still require reconciliation of subject identifiers, visit schedules and event timestamps before they can support KRIs, patient profiles or monitoring dashboards.
- Data warehouses expose metrics but hide preparation logic.
- The “last mile” of integration - the step that makes data analysis-ready - is often left undefined.

This gap is where clinical teams feel the pain most acutely.

And it’s also where the most leverage exists.

The missing layer: Integration for use, not just storage

High-performing clinical programs design one additional layer into their integration strategy:

A reusable, standardized data preparation layer aligned to how clinical teams actually work.

This layer:

- Sits between the lake and downstream tools
- Encodes preparation logic once, not repeatedly
- Produces deterministic, repeatable outputs
- Treats preparation as an asset, not an activity

Figure 2: Data lakes and warehouses centralize data, but the preparation layer required for operational use is often undefined.

This is where data integrity by design becomes real - not as a compliance slogan, but as an engineering discipline.
When preparation logic is encoded, versioned and deterministic, traceability and reproducibility become inherent properties of the output, not afterthoughts bolted on during inspection readiness.

A real-world story: When the data lake isn’t the problem

We saw this play out clearly in a large global Phase III oncology program, spanning 40+ countries, hundreds of sites, and data flowing from multiple vendors including EDC, laboratories, safety systems, and imaging providers.

On paper, the setup looked strong:

- Data from EDC, audit trails and vendors was centralized
- A modern data lake existed
- Central monitoring tools were in place

In practice, Central Monitors still spent days preparing data before each analysis cycle.

To run specialized KRIs - risk indicators tailored to study-specific safety or conduct signals - they had to:

- Pull data from multiple sources
- Apply study-specific logic
- Assemble tool-ready datasets manually

As a result, outputs varied between analysis cycles, making coverage handovers difficult and limiting reproducibility.

The issue was not the availability of data; it was the absence of integration designed for operational use.

What changed when integration was treated as a product

Instead of adding more tools, the approach shifted fundamentally.

The focus moved from:

“How do we get data into the lake?” to “How do we make the same analysis effortless every time?”

That led to a deliberate shift: treating data preparation as a shared, governed product rather than a distributed, ad hoc activity. In practice, this meant:

- Centralizing and standardizing preparation logic
- Building reusable data flows aligned to monitoring needs
- Delivering pre-prepared, analysis-ready datasets on demand
- Eliminating monitor-specific data handling

Preparation stopped being an individual task and became a shared capability.
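
As a rough illustration of what "centralizing preparation logic" can look like, the sketch below encodes one reconciliation step (matching lab results to EDC subjects) as a single shared function. It is not the program's actual implementation: the field names, the ID-normalization rule and the sample rows are assumptions made for the example.

```python
def normalize_subject_id(raw):
    """Map vendor spellings such as ' 001_0042 ' to the form '001-0042'."""
    return raw.strip().upper().replace("_", "-")


def prepare_lab_dataset(edc_subjects, lab_results):
    """Join lab results to EDC subjects on a normalized subject ID.

    Rows are returned in a fixed sort order, so repeated runs on the
    same inputs produce identical output regardless of input order.
    """
    site_by_subject = {
        normalize_subject_id(s["subject_id"]): s["site"] for s in edc_subjects
    }
    prepared = []
    for result in lab_results:
        subject = normalize_subject_id(result["subject_id"])
        if subject not in site_by_subject:
            # Unmatched rows are skipped here; in practice they would
            # more likely be routed to a data query than dropped.
            continue
        prepared.append(
            {
                "subject_id": subject,
                "site": site_by_subject[subject],
                "test": result["test"],
                "value": result["value"],
            }
        )
    return sorted(prepared, key=lambda r: (r["subject_id"], r["test"]))


# Hypothetical rows, for illustration only.
edc = [{"subject_id": "001-0042", "site": "S01"}]
lab = [
    {"subject_id": " 001_0042 ", "test": "AST", "value": 29},
    {"subject_id": "001-0042", "test": "ALT", "value": 31},
    {"subject_id": "009-9999", "test": "ALT", "value": 40},
]
for row in prepare_lab_dataset(edc, lab):
    print(row)
```

Because every monitor calls the same function, the study-specific logic lives in one governed place instead of in personal scripts.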
Figure 3: When preparation logic becomes reusable, analysis cycles become repeatable.

The outcome: Speed, consistency, and confidence

The impact was not theoretical.

Preparation time dropped from multiple days to a few hours for most analysis cycles, and to minutes for recurring standardized outputs.

- Time to generate analysis outputs reduced by ~30%
- Variability between runs disappeared
- Confidence in risk signals improved
- Teams focused on interpretation, not assembly

Monitors were able to redirect time from data assembly toward interpretation and escalation - the activities that actually reduce trial risk.

Most importantly, integration became invisible - which is exactly when it’s working.

What this teaches the industry

This experience highlights an important shift in how clinical data integration should be approached.

For years, integration strategies have focused on bringing data together - centralizing it in lakes, warehouses, and analytics platforms. Those investments were necessary, but they solved only part of the problem.

The next challenge is operational: turning integrated data into something teams can reliably use without rebuilding preparation logic every time.

This requires a change in how integration pipelines are designed:

- Integration pipelines must encode preparation logic, not just data movement
- Analysis-ready datasets should be treated as reusable assets across monitoring cycles and studies
- Preparation workflows should be deterministic, traceable, and reproducible by design

When this layer is missing, organizations often end up with modern platforms on paper but persistent manual work in practice.

When it exists, integration becomes far less visible - because the data simply works.

The most mature organizations are no longer asking where data lives.
They are asking how easily it can be reused - and increasingly, how quickly that reuse can be extended to new studies, new indications, and new regulatory expectations.

Where i2e fits and why this matters

At i2e, we approach clinical data integration differently:

- We design integration around clinical workflows and not generic schemas, aligning data preparation to how central monitors, study teams, and analysts actually generate insights.
- We treat data preparation as a reusable product, encoding preparation logic into governed pipelines that can be reused across studies and monitoring cycles.
- We engineer data integrity into the preparation layer itself - so consistency, traceability, and reproducibility are properties of the output, not controls applied after the fact.
- We operationalize the “last mile” of integration, creating deterministic, analysis-ready datasets that downstream tools can consume without additional manipulation.
- We focus on the space between platforms - where most friction lives - connecting data lakes, operational systems, and analytics tools through reusable integration patterns.
- We work alongside clinical teams - not above them - because the people closest to the data understand the workflows that integration must serve.

Final Thought

The measure of clinical data integration is not whether data has been centralized - it's whether clinical teams can reuse that data consistently, confidently, and without rework, a capability that is becoming central to modern clinical data management.

As clinical programs become more complex, the organizations that invest in usability, standardization, and integrity by design will move faster - not because they process more data, but because they remove friction from how data is used.

Increasingly, this is what modern clinical architectures must enable: data that is not only integrated, but operationally reusable across studies, monitoring cycles, analytics workflows and regulatory expectations.

The next phase of clinical
data integration will therefore not be defined by larger platforms or more pipelines, but by systems designed so that insight generation becomes routine rather than engineered each time.

Frequently Asked Questions (FAQs)

1. What is the “last mile” in clinical data integration?

The last mile refers to the final steps required to make integrated data usable, including validation, transformation, reconciliation, and structuring for downstream systems.

2. Why is data usability more important than integration?

Because integrated data that cannot be easily used still requires manual effort, delaying insights and decision-making.

3. What are common challenges in clinical data integration?

Common challenges include data inconsistency, lack of standardization, manual reconciliation, and difficulty preparing data for analytics and monitoring systems.
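
One way to test the question raised earlier, "Can different users get the same answer from the same data?", is to fingerprint each prepared dataset and compare fingerprints across runs. The sketch below is one possible approach using only the Python standard library; the function name `dataset_fingerprint` and the sample rows are hypothetical.

```python
import hashlib
import json


def dataset_fingerprint(rows):
    """Return a SHA-256 hex digest that ignores row order and key order.

    Each row is serialized with sorted keys, the serialized rows are
    sorted, and the result is hashed. Two runs that contain the same
    records therefore produce the same digest.
    """
    canonical = sorted(json.dumps(row, sort_keys=True) for row in rows)
    return hashlib.sha256("\n".join(canonical).encode("utf-8")).hexdigest()


# Two hypothetical runs with the same records in a different order.
run_a = [{"subject_id": "001-0042", "alt": 31}, {"subject_id": "001-0043", "alt": 28}]
run_b = [{"alt": 28, "subject_id": "001-0043"}, {"subject_id": "001-0042", "alt": 31}]

print(dataset_fingerprint(run_a) == dataset_fingerprint(run_b))  # True
```

Storing the digest alongside each analysis cycle gives a cheap reproducibility check: if two monitors prepare the same cut of data and the digests differ, the preparation logic has diverged somewhere.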

10 Essential dashboards for every pharma R&D portfolio management PMO

Well-designed data analytics in project management is no longer a reporting convenience. It is a strategic control tower for project portfolio performance. In complex organizations, where capital allocation, resource constraints, and regulatory pressures intersect, decision velocity depends on real-time visibility. Without a structured PMO project tracking dashboard, portfolio discussions rely on fragmented spreadsheets, static presentations, and subjective updates.

For PMO leaders and senior project professionals, the question is not whether to implement a dashboard, but how to design one that scales governance and enables strategic alignment. A mature PMO dashboard connects execution data to business outcomes, making portfolio trade-offs transparent and defensible. It transforms status reporting into performance management.

What is a PMO dashboard?

A PMO dashboard is a structured, visual decision-support layer that consolidates project, financial, risk, and resource data into actionable portfolio insights.

In a pharma R&D context, a dashboard must:

- Integrate clinical, financial, and strategic data
- Reflect phase-gated development realities
- Incorporate Probability of Technical and Regulatory Success (PTRS)
- Present risk-adjusted financial metrics (e.g., rNPV)
- Enable scenario modeling

Unlike traditional project dashboards focused on schedule and budget, an R&D PMO dashboard must support questions such as:

- Where should we allocate the next $100M?
- Which asset creates concentration risk?
- What is the impact of accelerating Phase II by 6 months?
- Are we over-indexed in early-stage assets?
- Are we realizing the intended benefits?

Operational dashboards focus on day-to-day execution. They highlight schedule variance, cost variance, milestone adherence, issue logs, and resource utilization. These views are often used by project managers and program leads to monitor delivery discipline.

Executive-level dashboards operate at a different altitude.
They aggregate portfolio-level KPIs, investment distribution, strategic alignment scores, benefit realization trends, and risk exposure summaries. Their purpose is not task tracking, but investment governance and performance oversight.

A robust PMO dashboard integrates data from Project Portfolio Management (PPM) systems, financial systems, timesheets, and risk registers. The outcome is a single source of truth for portfolio performance, enabling consistent conversations across leadership forums.

The 10 important pharma dashboards every PMO in life sciences should have

The following dashboards were selected based on three criteria: executive relevance, R&D specificity, and measurability. Each supports distinct governance decisions and should be integrated into a coherent portfolio reporting framework.

1. Portfolio Investment Allocation Dashboard

Objective: The Portfolio Investment Allocation Dashboard helps ensure that financial and strategic resources are distributed optimally across a pharma R&D portfolio. It enables the PMO and executives to monitor whether investments align with therapeutic priorities, risk appetite, and long-term pipeline strategy. By visualizing capital allocation across modalities, phases, and internal versus partnered assets, it highlights areas of over- or under-investment. This dashboard also supports scenario-based reallocation decisions to maximize portfolio value while managing concentration risk.

Key KPIs to Monitor:

- Budget vs. Actual Spend by therapeutic area, development phase, and modality
- Allocation Across Phases (Discovery → Phase I–III → Regulatory)
- Internal vs. Partnered Asset Investment Ratio
- Risk-Adjusted Investment Distribution (e.g., rNPV-weighted allocation)
- Concentration Risk Index (exposure to top 3–5 assets)

2. Clinical Milestone Performance Dashboard

Objective: The Clinical Milestone Performance Dashboard tracks the operational execution of clinical trials to ensure that studies progress on schedule and within defined parameters.
It provides visibility into enrollment, site activation, and critical path timelines, helping PMOs identify delays or bottlenecks early. By monitoring milestone adherence across the portfolio, it allows leaders to assess program predictability and take corrective actions to avoid downstream impacts on time-to-market and overall portfolio value. This dashboard also supports scenario analysis for risk mitigation and resource planning in ongoing trials.

Key KPIs to Monitor:

- Enrollment Velocity vs. Planned Targets (patients recruited per site over time)
- First Patient In (FPI) / Last Patient Out (LPO) Variance
- Site Activation Timelines (planned vs. actual)
- Critical Path Slippage (deviation from projected milestones)
- Trial Completion Probability or PTRS-based Milestone Status

3. Resource Capacity & Utilization Dashboard

Objective: The Resource Capacity & Utilization Dashboard helps PMOs ensure that human and operational resources are optimally allocated across the R&D portfolio. It provides visibility into functional workloads, skill availability, and potential bottlenecks, enabling proactive adjustments before delays occur. By monitoring both internal teams and outsourced resources, the dashboard ensures projects are adequately staffed and aligned with strategic priorities. It also supports scenario planning, helping executives assess the impact of shifting resources between high-value or time-sensitive projects.

Key KPIs to Monitor:

- FTE Demand vs. Capacity by function (clinical operations, biostatistics, regulatory, etc.)
- Resource Utilization Rate (percentage of available hours allocated to active projects)
- Skill Gap Heatmaps (identifying critical resource shortages)
- Outsourcing vs. Internal Resource Mix
- Overcommitment Index (projects exceeding available capacity)

4. Risk and Issue Management Dashboard

Objective: The Risk and Issue Management Dashboard provides a centralized view of potential and ongoing risks across the R&D portfolio, helping PMOs proactively identify, assess, and mitigate threats to project timelines, costs, and outcomes. It tracks both the probability and impact of risks, enabling teams to prioritize critical issues and take corrective actions before they affect portfolio performance. By monitoring issues in real time, the dashboard supports transparency, accountability, and strategic decision-making across projects and therapeutic areas. It also helps in evaluating the effectiveness of mitigation plans and risk response strategies.

Key KPIs to Monitor:

- Number of Active Risks and Issues by project, phase, and therapeutic area
- Risk Severity and Probability Scores (high, medium, low)
- Open vs. Closed Issue Count and Resolution Time
- Mitigation Plan Effectiveness (percentage of risks with approved and executed mitigation strategies)
- Risk Exposure Trend (portfolio-level view of risk concentration over time)

5. Portfolio Cost Dashboard

Objective: The Portfolio Cost Dashboard provides a consolidated view of total R&D spend across the portfolio, enabling PMOs to monitor financial performance at both asset and portfolio levels. It helps ensure that actual spending aligns with approved budgets and long-term financial plans, while highlighting cost overruns or inefficiencies early. By tracking spend across phases, therapeutic areas, and programs, the dashboard supports better financial governance and prioritization decisions. It also enables forward-looking cost forecasting, helping leadership anticipate funding needs and optimize capital allocation.

Key KPIs to Monitor:

- Total Portfolio Spend vs. Approved Budget
- Cost Variance by asset, phase, and therapeutic area
- Estimate at Completion (EAC) vs. Baseline Budget
- Cost per Phase / Cost per Asset
- Burn Rate Trends (monthly/quarterly spend velocity)

6. Risk-Adjusted Value Dashboard

Objective: The Risk-Adjusted Value Dashboard enables PMOs and leadership to evaluate the true economic potential of the R&D portfolio by incorporating uncertainty into financial projections. Instead of relying on deterministic forecasts, it applies probability factors, such as Probability of Technical and Regulatory Success (PTRS), to estimate expected value. This allows decision-makers to compare assets on a like-for-like basis, prioritize high-value programs, and identify concentration risks within the portfolio.

Key KPIs to Monitor:

- Risk-Adjusted Net Present Value (rNPV)
- PTRS (Probability of Technical & Regulatory Success)
- Expected Commercial Value
- Value Concentration Index
- Sensitivity Analysis Outputs

7. Scenario Planning & What-If Dashboard

Objective: The Scenario Planning & What-If Dashboard enables PMOs and executives to evaluate the impact of strategic decisions before committing resources. It allows users to simulate changes, such as accelerating or delaying assets, reallocating budgets, or adjusting resource capacity, and understand how these shifts affect portfolio value, timelines, and risk exposure. By integrating financial, clinical, and resource variables, the dashboard supports data-driven trade-off discussions during governance reviews. It also helps organizations prepare for uncertainty by comparing multiple future scenarios and identifying the most resilient portfolio strategy.

Key KPIs to Monitor:

- Portfolio Value by Scenario
- Impact of Acceleration/Delay on Time-to-Market
- Budget Reallocation Effects
- Resource Rebalancing Outcomes
- Sensitivity Analysis Results

8. Regulatory Dossier Readiness Dashboard

Objective: The Regulatory Dossier Readiness Dashboard provides a comprehensive view of how prepared an asset is for regulatory submission. It helps PMOs and regulatory teams track the completeness, quality, and timeliness of all required modules and supporting documentation.
By highlighting gaps, dependencies, and risks in submission readiness, the dashboard enables proactive issue resolution and ensures alignment with planned submission timelines. It also supports cross-functional coordination across clinical, CMC, and regulatory teams to avoid last-minute delays.

Key KPIs to Monitor:

- Dossier Completeness
- Submission Readiness Status (on-track, at-risk, delayed)
- Document Approval Cycle Time
- Number of Open Gaps/Deficiencies prior to submission
- Regulatory Milestone Adherence (planned vs. actual submission timelines)

9. External Innovation & Alliance Dashboard

Objective: The External Innovation & Alliance Dashboard provides visibility into the performance and contribution of partnered or in-licensed assets within the R&D portfolio. It helps PMOs and leadership monitor how collaborations, licensing deals, and strategic partnerships are delivering value against expectations. By tracking milestone achievements, financial commitments, and asset progression, the dashboard ensures that external innovation aligns with portfolio priorities. It also supports risk management by identifying underperforming partnerships or potential delays in externally sourced assets.

Key KPIs to Monitor:

- In-Licensed vs. Internal Asset Performance (progression through phases, clinical success)
- Deal Milestone Payments vs. Forecast
- Partnership Milestone Adherence (timelines for option exercises, research deliverables)
- Return on Collaboration Investment (financial and strategic impact)
- Pipeline Contribution from External Sources (percentage of portfolio value or assets)

10. Resource Demand & Capacity Planning Dashboard

Objective: The Resource Demand & Capacity Planning Dashboard enables PMOs to forecast, balance, and optimize resource needs across the R&D portfolio over time. It provides forward-looking visibility into demand generated by planned and ongoing studies versus available capacity across key functions such as clinical operations, data management, biostatistics, and regulatory.
By identifying future gaps or surpluses early, the dashboard supports proactive hiring, outsourcing, or reprioritization decisions. It also plays a critical role in scenario planning by showing how pipeline changes impact resource requirements and delivery timelines.

Key KPIs to Monitor:

- Forecasted Resource Demand vs. Available Capacity
- Capacity Gap/Surplus
- Resource Demand by Pipeline Scenario
- Hiring & Onboarding Lead Time vs. Demand Needs
- Planned vs. Actual Resource Fulfillment Rate

Frequently Asked Questions (FAQs)

1. What is a PMO dashboard in project portfolio management?
A PMO dashboard is a centralized, visual decision-support system that consolidates project, financial, risk, and resource data into actionable portfolio insights.

2. Why are PMO dashboards important in pharma R&D?
PMO dashboards enable real-time visibility, faster decision-making, and better capital allocation in complex, regulated R&D environments.

3. What are the key types of pharma PMO dashboards?
Key dashboards include Portfolio Investment, Clinical Milestone Performance, Resource Capacity, Risk & Issue Management, Cost Tracking, Scenario Planning, and Regulatory Readiness dashboards.
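
Worked example: risk-adjusted value

To make the Risk-Adjusted Net Present Value (rNPV) KPI from the Risk-Adjusted Value Dashboard more concrete, here is a minimal Python sketch. It assumes one common simplification: each future cash flow is discounted and then weighted by the probability that it occurs (for revenue, this would typically be the asset's PTRS). The `CashFlow` class, the `rnpv` function, the 10% discount rate, and all figures are hypothetical and chosen only for illustration; a real dashboard would pull these inputs from finance and portfolio systems and may use a more detailed, phase-by-phase model.

```python
from dataclasses import dataclass


@dataclass
class CashFlow:
    year: int           # years from today
    amount: float       # positive = inflow, negative = cost (e.g. USD millions)
    probability: float  # chance this cash flow occurs, between 0 and 1


def rnpv(cash_flows, discount_rate):
    """Return the sum of probability-weighted, discounted cash flows."""
    return sum(
        cf.probability * cf.amount / (1 + discount_rate) ** cf.year
        for cf in cash_flows
    )


# Hypothetical asset: all numbers are made up for illustration.
asset = [
    CashFlow(year=0, amount=-50.0, probability=1.0),  # trial cost, incurred for certain
    CashFlow(year=1, amount=220.0, probability=0.5),  # revenue if approved (PTRS = 50%)
]

value = rnpv(asset, discount_rate=0.10)
print(round(value, 2))  # about 50.0: -50 + 0.5 * 220 / 1.1
```

An unadjusted forecast would value the same asset at 150 (that is, -50 + 220 / 1.1), so weighting by PTRS shows how much of the headline value depends on technical and regulatory success. Rerunning the function with different probabilities or discount rates gives a simple form of the sensitivity analysis listed among the KPIs.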